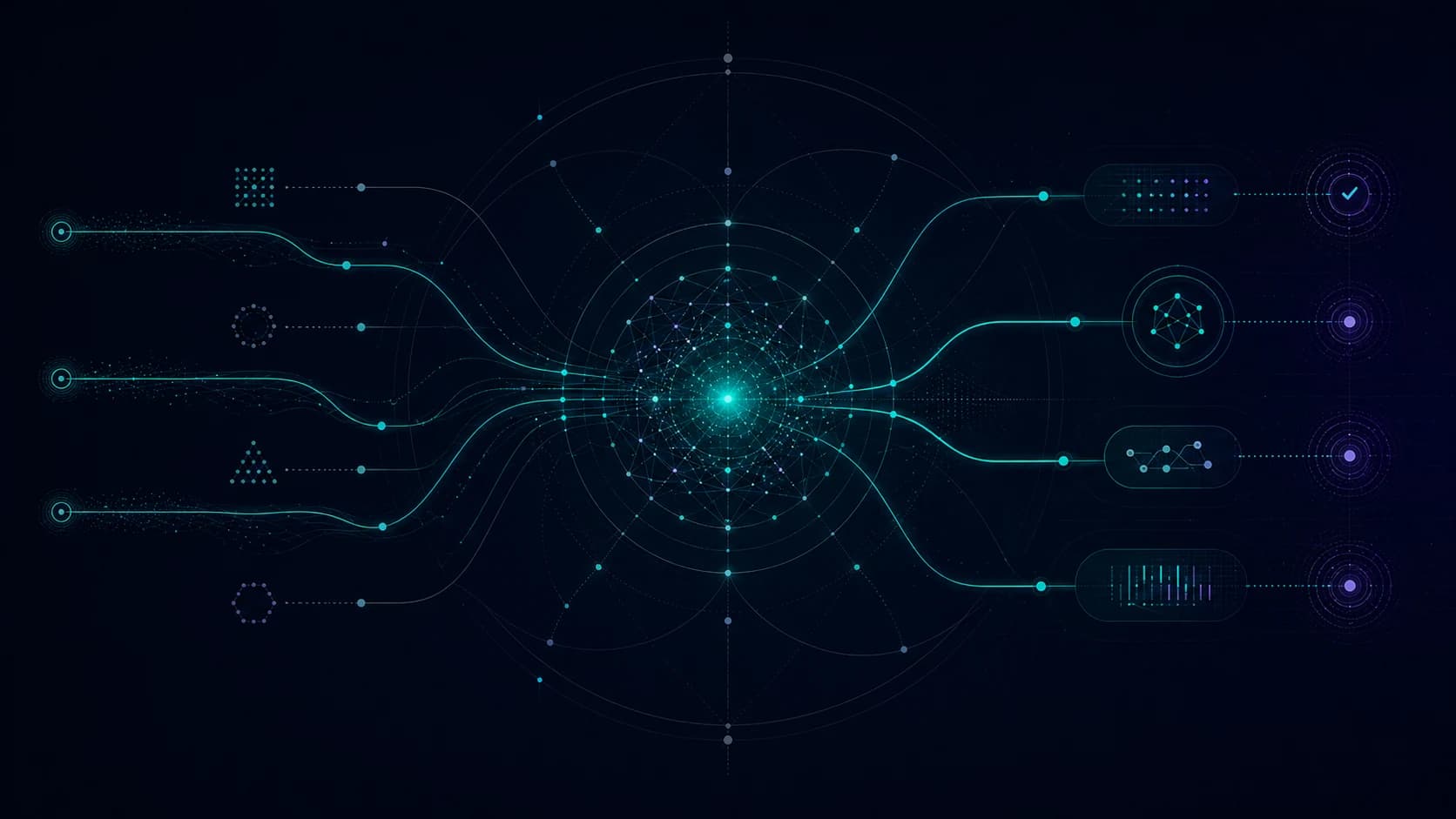

GPT-5.5 raises the ceiling. It does not remove the need for local inference. In fact, smarter frontier models make routing more valuable, because the gap between tasks a cheap model can handle and tasks that need a frontier model gets clearer.

Local models are still useful when the task is bounded, the input is predictable, and the output can be validated.

Where local still works

| Task | Why local can fit |
|---|---|
| classification | small label sets are easy to test |
| extraction | schemas constrain the answer |
| deduping | deterministic checks can verify output |
| draft cleanup | low-risk language edits |
| private notes | data stays on the machine |
| batch tagging | cost stays predictable |
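As a rough illustration of "the output can be validated", here is a minimal sketch of two deterministic checks: one for a classification label and one for a schema-constrained extraction. The label set, the invoice schema, and the function names are hypothetical and chosen only for this example.

```python
import json

# Hypothetical label set for a ticket classifier (an assumption for this sketch).
ALLOWED_LABELS = {"billing", "support", "sales", "spam"}

# Hypothetical extraction schema: field name -> accepted Python type(s).
INVOICE_SCHEMA = {"vendor": str, "total": (int, float), "currency": str}


def valid_label(raw: str) -> bool:
    """Accept the output only if it is exactly one known label."""
    return raw.strip().lower() in ALLOWED_LABELS


def valid_extraction(raw: str, schema: dict) -> bool:
    """Accept the output only if it is a JSON object with exactly the schema's fields."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return False
    if not isinstance(data, dict) or set(data) != set(schema):
        return False
    # Every field must have one of the accepted types.
    return all(isinstance(data[key], kind) for key, kind in schema.items())
```

If a check fails, the caller can retry locally or escalate the item to a larger model. Either way, the failure is detected by code rather than by a person reading the output.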

Where frontier still wins

Use a frontier model when the task needs judgment across messy context.

- ambiguous planning

- long codebase work

- difficult research synthesis

- tool-heavy execution

- high-stakes review

- multimodal reasoning

- customer-facing decisions
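The criteria above (bounded task, predictable input, verifiable output, and stakes) can be sketched as a small routing function. This is one possible encoding, not a definitive policy: the `Task` fields and the `choose_model` name are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    kind: str                   # e.g. "classification" or "planning"
    bounded: bool               # scope is small and well defined
    predictable_input: bool     # inputs follow a known shape
    verifiable: bool            # output can be checked automatically
    high_stakes: bool = False   # e.g. review or customer-facing decision


def choose_model(task: Task) -> tuple[str, str]:
    """Return (route, reason), where route is 'local' or 'frontier'."""
    if task.high_stakes:
        return "frontier", "high-stakes task"
    if not task.bounded:
        return "frontier", "task is not bounded"
    if not task.predictable_input:
        return "frontier", "input is not predictable"
    if not task.verifiable:
        return "frontier", "output cannot be validated"
    return "local", "bounded, predictable, and verifiable"
```

Returning the reason alongside the route keeps the decision explainable, which matters once routing choices are shown to a client.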

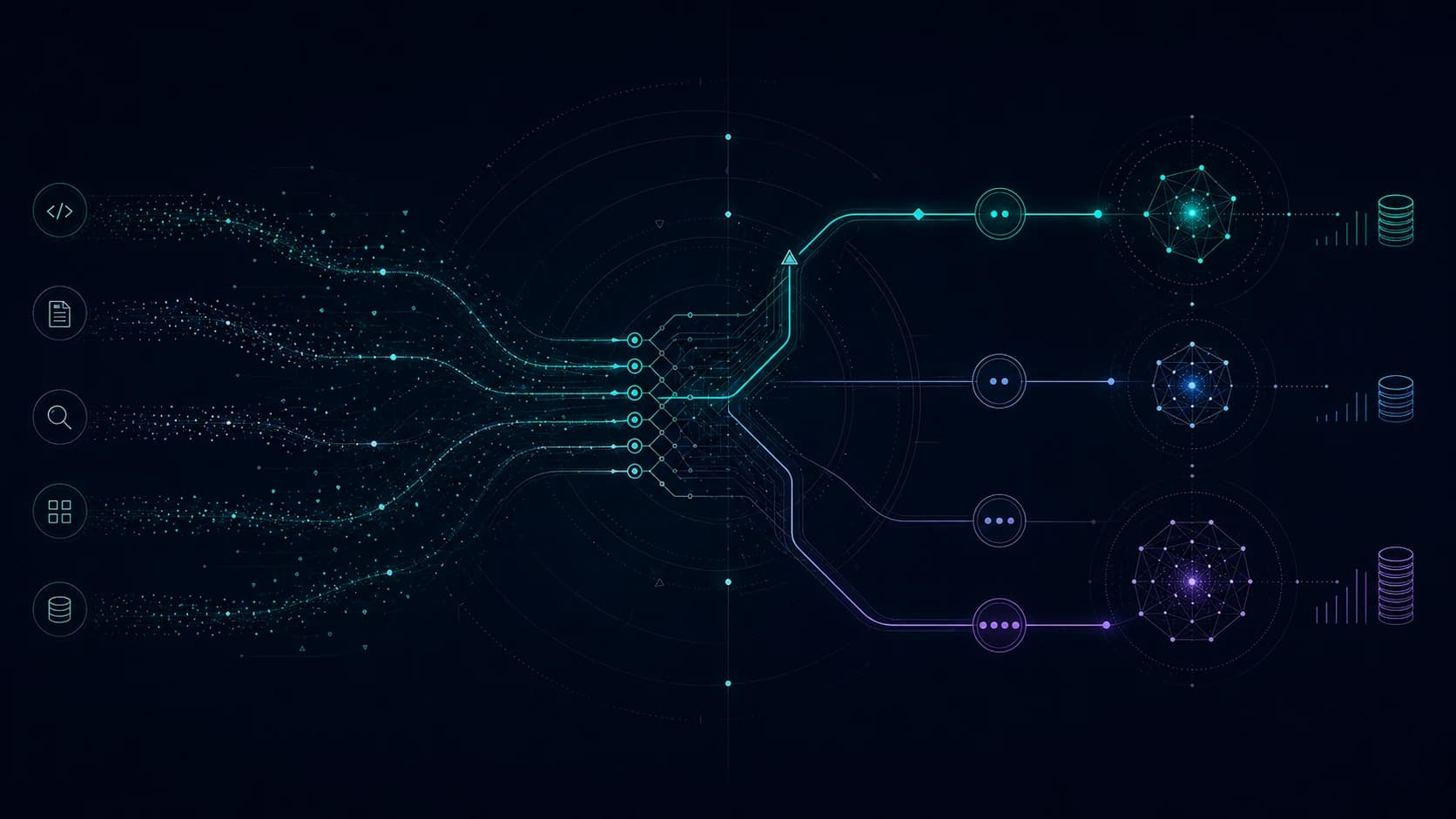

The hybrid pattern

A good agent does not have to rely on a single model. It needs the right model at each step.

| Stage | Model choice |
|---|---|
| intake | local or small model |
| enrichment | small model plus tools |
| planning | frontier model |
| execution | frontier model with budget |
| validation | deterministic checks plus reviewer model |
| receipt | template or small model |
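The stage table above can be expressed as a plain lookup plus a spending cap for frontier calls. The sketch below is a hedged illustration: the tier strings are labels rather than real model names, `TokenBudget` and `run_stage` are hypothetical helpers, and charging both planning and execution against the same budget is an assumption.

```python
# Stage -> model tier, copied from the table. Tier strings are labels only.
STAGE_MODELS = {
    "intake": "local-or-small",
    "enrichment": "small+tools",
    "planning": "frontier",
    "execution": "frontier",
    "validation": "checks+reviewer",
    "receipt": "template-or-small",
}


class BudgetExceeded(Exception):
    """Raised when a frontier call would push spend past the cap."""


class TokenBudget:
    """Caps frontier token spend for one run (the limit is caller-supplied)."""

    def __init__(self, limit: int) -> None:
        self.limit = limit
        self.used = 0

    def charge(self, tokens: int) -> None:
        if self.used + tokens > self.limit:
            raise BudgetExceeded(f"{self.used + tokens} > {self.limit}")
        self.used += tokens


def run_stage(stage: str, est_tokens: int, budget: TokenBudget) -> str:
    """Pick the tier for a stage; only frontier stages draw on the budget."""
    tier = STAGE_MODELS[stage]
    if tier == "frontier":
        budget.charge(est_tokens)
    return tier
```

With this shape, a run that hits the cap fails loudly before the call is made instead of overspending silently.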

Why this matters for SMBs

Small businesses are cost-sensitive. They also cannot afford bad automation. The answer is a route, not a religion.

Run local where it is boring and safe. Spend frontier tokens where ambiguity creates cost. Log each routing decision and the reason for it so the client can see why the system made the choice.
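One way to make routing visible to a client is to emit a structured record for every decision. The following is a minimal sketch: the field names, the `routing_record` helper, and the cost estimate parameter are assumptions for this example, not a prescribed log format.

```python
import json
from datetime import datetime, timezone


def routing_record(task_id: str, stage: str, route: str, reason: str,
                   est_cost_usd: float) -> str:
    """Build one JSON log line describing a routing decision."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "task_id": task_id,
        "stage": stage,
        "route": route,                # "local" or "frontier"
        "reason": reason,              # human-readable justification
        "est_cost_usd": est_cost_usd,  # caller-supplied estimate
    }
    return json.dumps(record, sort_keys=True)


if __name__ == "__main__":
    # Example with made-up values; append lines like this to a log file.
    print(routing_record("t-001", "planning", "frontier",
                         "task is not bounded", 0.04))
```

One JSON object per line is easy to append to a file and to summarize later, for example by counting how many tasks went to each route.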