Most AI projects start with access. Give the team a model, add a chat box, connect a few tools. That can help individuals move faster, but it rarely changes how the business runs.

McKinsey's 2025 State of AI survey points to the same pattern: stronger AI performers are more likely to redesign workflows and scale agents into real operating loops. The work loop decides whether the agent matters.

Access versus workflow

| Access project | Workflow project |
|---|---|
| buys a subscription | maps the handoff |
| asks people to try prompts | names the failure point |
| measures usage | measures accepted output |
| depends on enthusiasm | changes the operating cadence |
| creates scattered wins | creates a repeatable system |

Access is still useful. It gives the team language, muscle memory, and faster drafts. It becomes business value when the workflow changes around it.

Pick the right workflow

Do not start with the flashiest task. Start with the one that has clean boundaries.

Clean boundaries usually point to intake, triage, drafting, research, QA, reporting, or handoff work. Those are ordinary loops where a small business feels friction every week.

The 30-day test

Pick one workflow with a clean input and a clean output.

Examples:

- new lead intake to qualified call notes

- meeting transcript to task list

- customer email to draft response

- Google Business Profile review to reply draft

- service page idea to sourced outline

- invoice PDF to accounting-ready fields

Then define the run.

What to measure

Usage is weak evidence. Measure outcomes the business can see:

- minutes saved per accepted output

- rejected output rate

- human correction rate

- time to customer response

- missed handoffs

- cost per accepted artifact

- customer satisfaction where available

Add one more metric: the number of times the workflow falls back to the old process. Fallbacks show where the operating design is still weak.
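These metrics can be computed from a plain run log. The sketch below is one possible way to do it in Python, not a prescribed implementation. The `Run` record, its field names, and the `summarize` function are hypothetical. The exact definitions are also assumptions: the correction rate is measured over accepted outputs, and the cost per accepted artifact includes the cost of rejected runs.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Run:
    """One pass through the workflow (hypothetical record; field names are assumptions)."""
    accepted: bool            # a human accepted the output
    corrected: bool           # a human edited the output before accepting it
    fell_back: bool           # the team used the old process instead
    baseline_minutes: float   # estimated time for the manual process
    actual_minutes: float     # agent time plus human review time
    cost_usd: float           # model and tooling cost for this run


def _ratio(numerator: float, denominator: float) -> Optional[float]:
    """Return numerator / denominator, or None when the denominator is zero."""
    return numerator / denominator if denominator else None


def summarize(runs: list[Run]) -> dict:
    """Summarize a run log into the metrics listed above."""
    if not runs:
        raise ValueError("need at least one run to summarize")

    accepted = [r for r in runs if r.accepted]
    minutes_saved = sum(r.baseline_minutes - r.actual_minutes for r in accepted)

    return {
        "total_runs": len(runs),
        "rejected_output_rate": (len(runs) - len(accepted)) / len(runs),
        # Assumption: corrections are counted only among accepted outputs.
        "human_correction_rate": _ratio(sum(r.corrected for r in accepted), len(accepted)),
        "minutes_saved_per_accepted_output": _ratio(minutes_saved, len(accepted)),
        # Assumption: rejected runs still cost money, so they count toward the total.
        "cost_per_accepted_artifact": _ratio(sum(r.cost_usd for r in runs), len(accepted)),
        "fallbacks": sum(r.fell_back for r in runs),
    }


# Illustrative data only; these numbers are made up for the example.
example_runs = [
    Run(accepted=True, corrected=False, fell_back=False,
        baseline_minutes=20, actual_minutes=5, cost_usd=0.5),
    Run(accepted=True, corrected=True, fell_back=False,
        baseline_minutes=20, actual_minutes=12, cost_usd=0.5),
    Run(accepted=False, corrected=False, fell_back=True,
        baseline_minutes=20, actual_minutes=25, cost_usd=0.5),
    Run(accepted=True, corrected=False, fell_back=False,
        baseline_minutes=20, actual_minutes=5, cost_usd=0.5),
]

print(summarize(example_runs))
```

Dividing by accepted outputs rather than total runs keeps the numbers honest: a workflow that produces many cheap drafts nobody accepts will show a high cost per accepted artifact instead of a flattering cost per run.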

What this means for Om Concepts clients

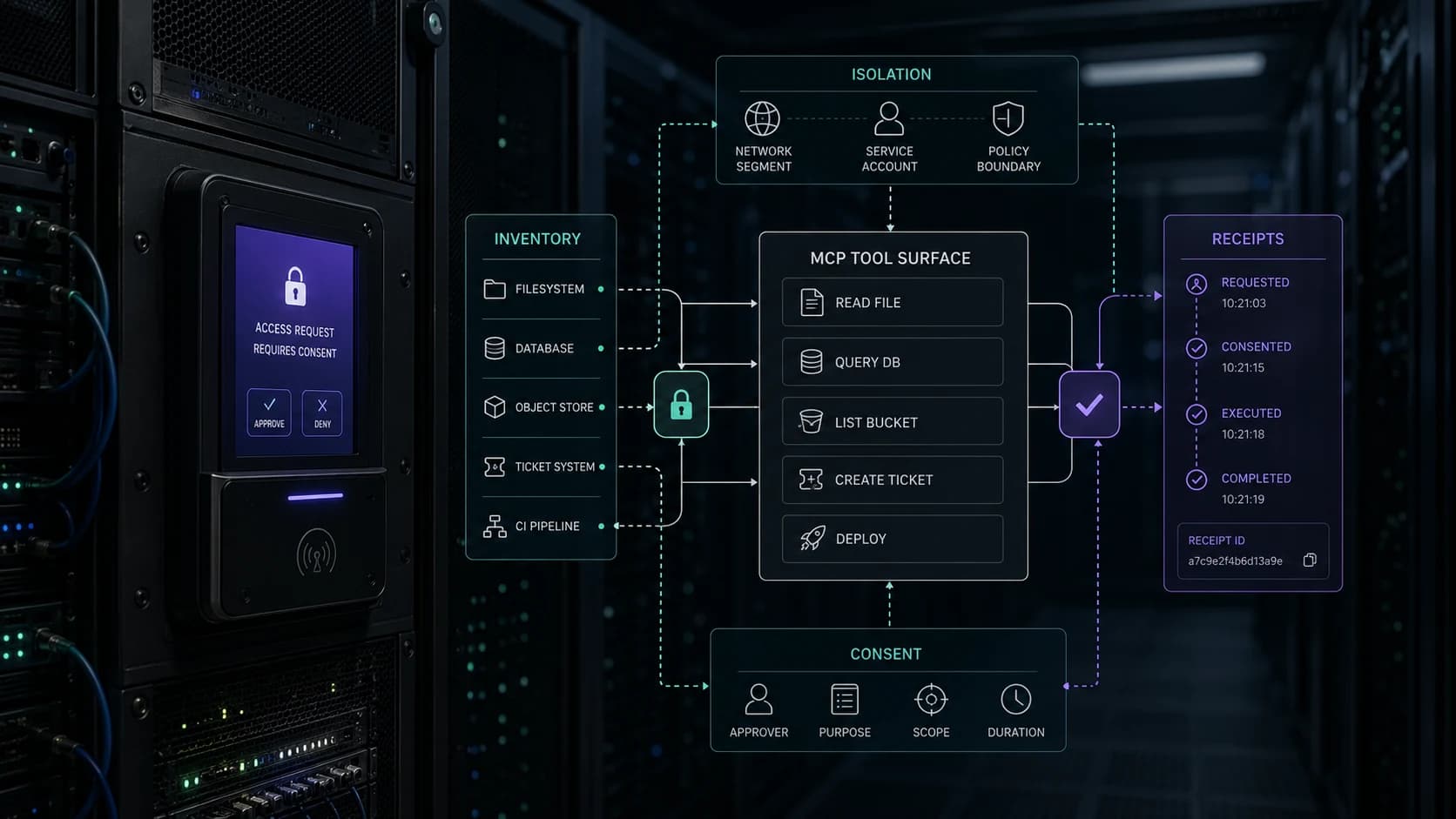

Start narrow. A good first agent should feel boring after a month because it does the same bounded job every time. Once the receipt trail proves the loop works, expand the scope.

That is how the business gets better without betting the whole operation on a demo.
